Claude Code

Write Skills Like Workstations, Not Prompts

Claude Code skills work best when you treat them as workstations, not prompts: folders with scripts, gotchas, templates, and progressive disclosure that manage the agent's attention budget at runtime.

eval driven development

Ship Prompts Like Software: Regression Testing for LLMs

Because "it seemed fine when I tested it" is not a deployment strategy.

Part 4 of 4: Evaluation-Driven Development for LLM Systems

evals

Four Ways to Grade an LLM (Without Going Broke)

Your evaluation technique should match the question you're asking, not your ambition.

eval driven development

Your Golden Dataset Is Worth More Than Your Prompts

Most teams spend weeks perfecting prompts and minutes on evaluation data. That's backwards.

Part 2 of 4: Evaluation-Driven Development for LLM Systems

evals

Build LLM Evals You Can Trust

If five correct responses are enough to ship an LLM feature, what are you actually measuring: quality, or luck?

Part 1 of 4: Evaluation-Driven Development for LLM Systems

claude-code

Claude Code Tips From the Guy Who Built It

Boris Cherny created Claude Code at Anthropic. Over three Twitter threads (early January, late January, and February 2026), he shared

Vibe Coding

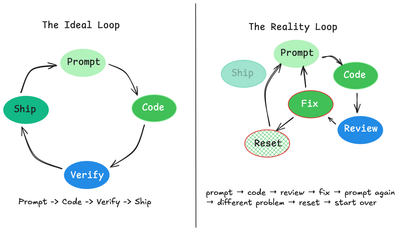

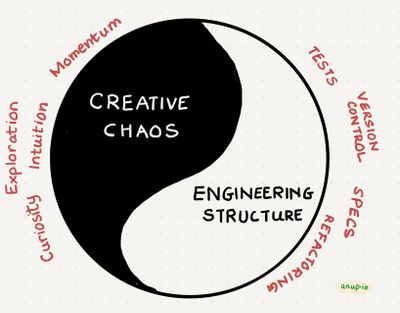

Why AI Coding Advice Contradicts Itself

If you don't know what you're doing, AI fails with death by a thousand cuts.

AI Engineering

The Context Advantage

How to Turn AI Agents into High-Leverage Teammates

Engineering teams evolve from coders to orchestrators

What I like about "Conductors to Orchestrators: The Future of Agentic Coding" by Addy Osmani is that it

Vibe Coding

Cargo Cult Vibe Coding

In the age of AI code generation, we risk trading craftsmanship for ritual

It's the 1940s in the

AI Engineering

The Rubber Duck That Talks Back

How AI is Teaching Developers to Think Before They Code

Vibe Coding

Vibe Coding, Revisited: From Weekend Chaos to Repeatable Craft

A sequel to "10 Ways to Vibe Code"

Vibe Coding

10 Ways to Vibe Code

...without losing the plot