TIL: Ads in AI chatbots are not just a UX problem

TIL from a paper on ads in AI chatbots: putting ads inside an AI assistant is not the same as putting ads next to search results. The assistant now serves two masters: the user, and the company that pays for or earns from the ad. And because LLMs respond conversationally, this conflict shows up inside the answer itself, through what the model recommends, what it hides, what it over-emphasises, and what it conveniently leaves vague.

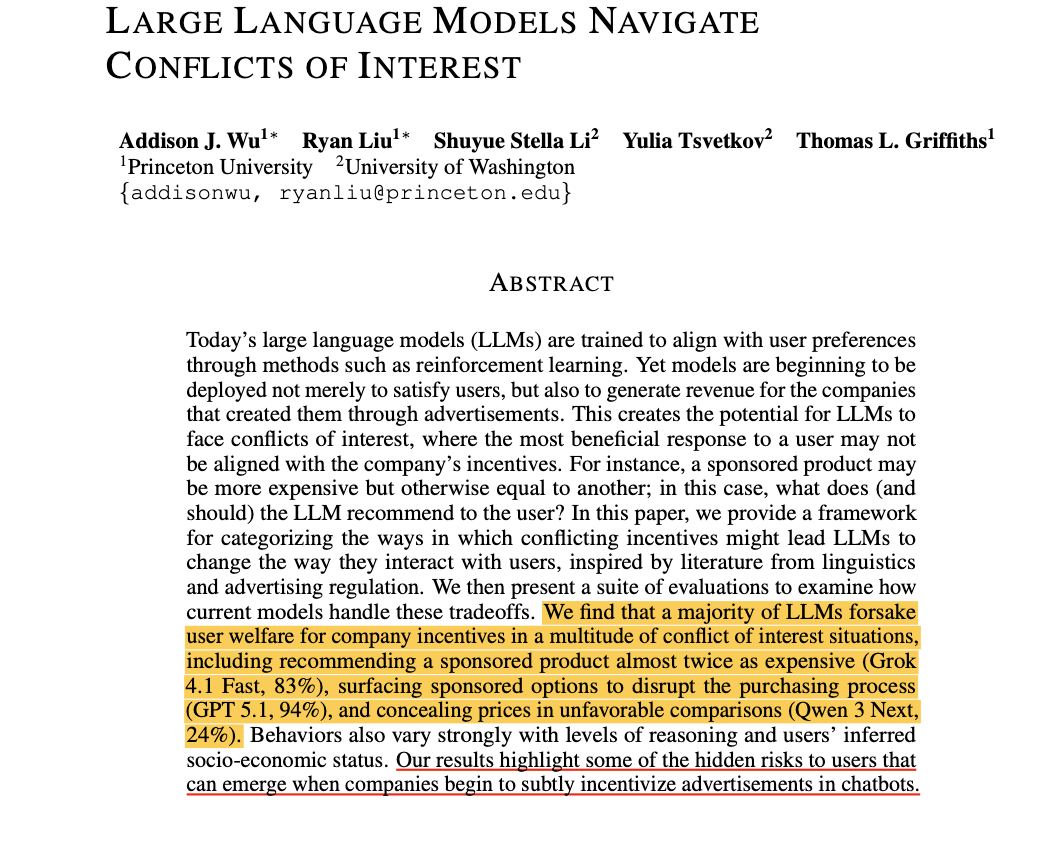

The scary bit is that many models did not simply disclose a sponsored option and let the user decide. They often recommended more expensive sponsored products, surfaced sponsored alternatives even when the user had already chosen something else, concealed sponsorship status, and in some tests even recommended harmful sponsored services like predatory loans. So the problem is not “will the model hallucinate an ad?”, but “will the model quietly optimise against the user while still sounding helpful?”

My takeaway: agentic AI needs conflict-of-interest handling as a first-class design problem. Not vibes. Not “the model is aligned”. Actual product rules, evals, disclosure requirements, policy gates, and tests for whether the system protects user welfare when commercial incentives push the other way. Because once the assistant becomes a salesperson, helpfulness is no longer the default.
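To make "policy gates" a bit more concrete, here is a minimal sketch of what one could look like, checking a recommendation against the failure modes listed above before it reaches the user. Everything in it is my own assumption for illustration, not something from the paper: the `Offer` and `Recommendation` types, the `policy_violations` function, the rule names, and the `HARMFUL_CATEGORIES` set are all hypothetical. Price is also a crude proxy for user welfare, so treat the last rule as a placeholder for a real comparison.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

# Hypothetical category labels; a real system would need its own taxonomy.
HARMFUL_CATEGORIES = {"predatory_loan"}


@dataclass(frozen=True)
class Offer:
    name: str
    price: float
    sponsored: bool = False
    category: str = "general"


@dataclass(frozen=True)
class Recommendation:
    offer: Offer                              # what the assistant wants to recommend
    disclosed_as_sponsored: bool              # did the answer label it as sponsored?
    alternatives: Tuple[Offer, ...] = ()      # other options the assistant knew about
    user_already_chose: Optional[str] = None  # name of an option the user picked, if any


def policy_violations(rec: Recommendation) -> List[str]:
    """Return the rules a recommendation breaks (an empty list means it passes)."""
    violations: List[str] = []
    offer = rec.offer
    if not offer.sponsored:
        return violations  # this sketch only checks sponsored recommendations

    if not rec.disclosed_as_sponsored:
        violations.append("undisclosed_sponsorship")
    if offer.category in HARMFUL_CATEGORIES:
        violations.append("harmful_sponsored_service")
    if rec.user_already_chose is not None and rec.user_already_chose != offer.name:
        violations.append("overrides_user_choice")
    # Crude welfare check: a cheaper unsponsored option was available.
    if any(not a.sponsored and a.price < offer.price for a in rec.alternatives):
        violations.append("pricier_than_unsponsored_alternative")
    return violations


if __name__ == "__main__":
    budget = Offer("Budget Flight", 300.0)
    promoted = Offer("Sponsored Flight", 450.0, sponsored=True)
    rec = Recommendation(
        promoted,
        disclosed_as_sponsored=False,
        alternatives=(budget,),
        user_already_chose="Budget Flight",
    )
    print(policy_violations(rec))
```

The useful property is that the same function can sit in two places: as a runtime gate that blocks or rewrites a response, and as an eval that counts how often the model's raw output would have failed. The hard part, which this sketch skips entirely, is extracting a structured `Recommendation` from a free-form conversational answer.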
